I’ve seen a great deal of discussion online recently about AI vibe coding. The discussion is interesting not only from a technical perspective, but because it raises questions about judgement, risk and reasoning that are very familiar in legal practice.

Before coming to the Bar, I worked as a technology consultant, where much of my formative professional experience was in system design, development and integration. From that perspective, it is genuinely encouraging to see so many people experimenting with code, including by vibe coding. Anything that lowers the barrier to entry clearly has value. Even now, as a barrister, I still encourage people on occasion to open a Python interpreter, write a few scripts and see what happens.

Exposure to coding, even at a modest level, develops a particular way of thinking: breaking problems down, making assumptions explicit and reasoning about what follows if those assumptions change. Those habits are valuable in many fields, though they map especially well onto legal practice.

If I have any unease with vibe coding, it is not necessarily about the tools themselves, nor their use as a gateway to coding. It is about what can silently fall away when abstraction becomes very good.

When the focus is purely on whether the output appears to work, there is little pressure to think about how it works – or whether it will continue to work as conditions change. That question, “Will this still work if something shifts?”, is central to both technology and the practice of law.

Like legal reasoning, the art of good software development is constrained by certain underlying realities.

For example, at scale it is often cheaper to store results than to recompute them. An application serving an enterprise should not usually recalculate the same totals every time a user opens a report. Instead, the result is computed once, stored and recalculated only when the underlying data changes.
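As a minimal sketch of the "compute once, store, recalculate on change" idea, consider the toy example below. The class name `ReportStore`, its methods and the `recompute_count` counter are hypothetical, introduced purely for illustration; a real enterprise system would more likely rely on a database or a dedicated cache.

```python
class ReportStore:
    """Toy ledger that caches its total and recomputes only after a change."""

    def __init__(self):
        self._amounts = []
        self._cached_total = None  # None means "stale: recompute on next read"
        self.recompute_count = 0   # instrumentation for this example only

    def add_amount(self, amount):
        self._amounts.append(amount)
        self._cached_total = None  # the underlying data changed, so invalidate

    def total(self):
        if self._cached_total is None:
            # The expensive step runs only when the stored result is stale.
            self._cached_total = sum(self._amounts)
            self.recompute_count += 1
        return self._cached_total


store = ReportStore()
store.add_amount(120)
store.add_amount(80)
first_read = store.total()   # computed and stored
second_read = store.total()  # served from the stored result
```

Opening the "report" twice triggers a single calculation. The trade-off is that every route by which the data can change must remember to invalidate the stored result, which is exactly the kind of assumption worth making explicit.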

Similarly, when searching data, brute-force approaches do not scale. Iterating through every record to ask “Is this what I’m looking for?” works for small datasets, though not for large ones.

How data is structured – whether it is grouped, indexed or partitioned – determines how efficiently it can be queried. If a user asks a system to identify expenditure in a particular year, the sensible starting point is the records for that year, not the entire dataset. The same is true in court: if a judge asks counsel the same question, the sensible starting point is the material for that year, not everything that happens to exist.
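The difference between scanning everything and starting from the right year can be sketched roughly as follows. The sample records and the function names are assumptions made for illustration only; in practice a database index or partition would usually do this work.

```python
from collections import defaultdict

# Hypothetical sample data: one dictionary per expenditure record.
records = [
    {"year": 2021, "amount": 100},
    {"year": 2022, "amount": 250},
    {"year": 2022, "amount": 50},
    {"year": 2023, "amount": 75},
]


def spend_by_scan(records, year):
    """Brute force: examine every record, whatever its year."""
    return sum(r["amount"] for r in records if r["year"] == year)


def build_year_index(records):
    """Group records by year once, so later queries start in the right place."""
    index = defaultdict(list)
    for r in records:
        index[r["year"]].append(r)
    return index


def spend_by_index(index, year):
    """Look only at the records already filed under the requested year."""
    return sum(r["amount"] for r in index.get(year, []))


year_index = build_year_index(records)
```

Both approaches give the same answer. The indexed version pays a one-off cost to organise the data and then touches only the relevant records on each query, which matters little with four records and a great deal with four million.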

None of these ideas are novel. However, each requires you to pause, model the problem and consider the trade-offs. Unless those principles are already understood or are deliberately surfaced and interrogated, vibe-coded solutions tend to bypass this analysis in favour of whatever produces a plausible end result most quickly.

That shortcut matters, because it discourages a habit that is critical in both software development and legal practice: asking not merely whether something works, but whether it works for the right reasons and whether it will fail gracefully when circumstances change.

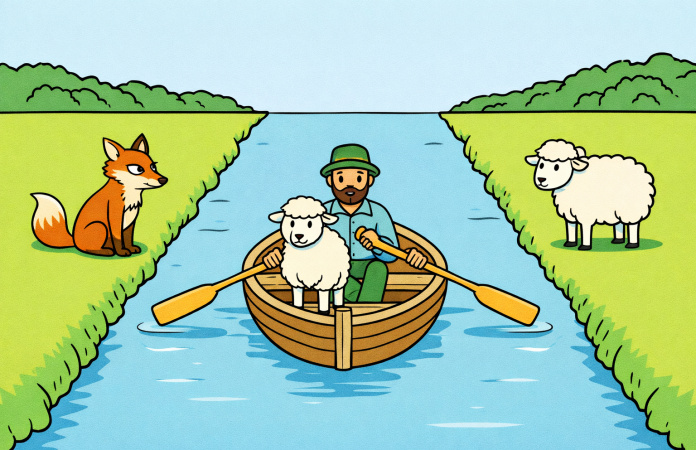

Many of us will remember school exercises involving transporting animals across a river with a rowing boat, where certain combinations mustn’t be left unattended. The exercise was never really about sheep or foxes. It was about logic, sequencing and identifying constraints. Computing, like the law, asks the same questions – just with more variables and higher stakes. Vibe coding risks focusing on whether the objective has been reached, without sufficient attention to what happens along the way.
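For the curious, the puzzle can be modelled in a few lines. The sketch below assumes one common version of the exercise (a farmer, a fox, a sheep and a cabbage, with a boat that carries the farmer and at most one passenger); the rules in `FORBIDDEN` and all names are illustrative assumptions rather than a canonical statement of the problem.

```python
from collections import deque

ITEMS = ("farmer", "fox", "sheep", "cabbage")
# Pairs that must not share a bank unless the farmer is present (assumed rules).
FORBIDDEN = [{"fox", "sheep"}, {"sheep", "cabbage"}]


def is_safe(left_bank):
    """A state is safe if neither bank holds a forbidden pair without the farmer."""
    right_bank = frozenset(ITEMS) - left_bank
    for bank in (left_bank, right_bank):
        if "farmer" not in bank and any(pair <= bank for pair in FORBIDDEN):
            return False
    return True


def solve():
    """Breadth-first search over bank states; returns a shortest list of crossings."""
    start = frozenset(ITEMS)  # everyone begins on the left bank
    goal = frozenset()        # everyone ends on the right bank
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        left, path = queue.popleft()
        if left == goal:
            return path
        farmer_on_left = "farmer" in left
        here = left if farmer_on_left else frozenset(ITEMS) - left
        # The farmer crosses alone (None) or with one passenger from his bank.
        for passenger in [None] + sorted(here - {"farmer"}):
            movers = {"farmer"} | ({passenger} if passenger else set())
            new_left = left - movers if farmer_on_left else left | movers
            if new_left not in seen and is_safe(new_left):
                seen.add(new_left)
                queue.append((new_left, path + [passenger or "nothing"]))
    return None  # no safe sequence of crossings exists


crossings = solve()
```

Each entry in `crossings` names what the farmer carries on that trip. The instructive part is `is_safe`: the search checks every intermediate state against the constraints, not merely whether everyone eventually arrives.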

A further limitation of vibe coding is its difficulty in grappling with more serious technological requirements, particularly around security and data protection. User interface controls, such as login screens, are relatively easy to generate, and a competent AI-driven development tool will create a convincing one with ease. Such controls are not, however, security boundaries by themselves.

Proper protection requires thinking about where data is stored, who can access it, with what authentication factors, and how it can be reached both intentionally and accidentally. Sensitive data should not reside in publicly accessible locations, for example, regardless of whether a login page ostensibly guards it. In some cases, encryption will be appropriate, though encryption can carry a computational cost, which introduces further trade-offs between security, performance and usability.

Working through those questions – what genuinely needs to be encrypted, what can safely remain unencrypted, what might form part of a non-sensitive index – requires judgement. These decisions depend on context, proportionality and risk tolerance. They are difficult to automate away because they are not merely technical choices; they are evaluative ones.

Those evaluative skills are, of course, central to legal practice. Lawyers routinely assess whether a safeguard is proportionate, whether a risk is real or theoretical and whether an argument will hold up when placed under stress. The mental discipline required is strikingly similar.

Seen in that light, debates about vibe coding are less about tools and more about how we reason through abstraction. For me, the most productive way to approach vibe coding is therefore to treat it as a starting point rather than a destination. It’s a way to explore ideas quickly, though not a substitute for understanding.

If you are thinking of experimenting with vibe coding, or indeed with any other coding technique, a few principles and ideas are worth keeping in mind:

1. Be explicit about data and scale

Ask what happens when a dataset grows, when usage patterns change or when the system is placed under sustained load. Where is computation happening and what could sensibly be calculated once and reused?

Anyone who has stood before a busy judge with a substantial bundle and discovered, midway through their submissions, that the decisive document is technically “there” but practically inaccessible will recognise the cost of scale without structure.

2. Assume security is your responsibility

Interfaces are not security boundaries. If the data matters, whether commercially, legally or otherwise, you need to understand how it is stored, accessed and protected.

As with confidentiality obligations in litigation, labels matter far less than the practical reality of who can see what, when and how.
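One way to picture the point that interfaces are not security boundaries is to place the access rule where the data is read, so that it applies however the request arrives. This is a deliberately simplified sketch: the in-memory `_DOCUMENTS` store, the `AccessDenied` exception and `fetch_document` are hypothetical, and the sketch assumes the caller's identity has already been properly authenticated elsewhere.

```python
class AccessDenied(Exception):
    """Raised when a caller may not read the requested document."""


# Hypothetical in-memory store standing in for a real database.
_DOCUMENTS = {
    "advice-001": {"owner": "alice", "body": "Privileged advice"},
}


def fetch_document(doc_id, requesting_user):
    """Enforce the access rule where the data lives, not in the interface."""
    doc = _DOCUMENTS.get(doc_id)
    if doc is None or doc["owner"] != requesting_user:
        # Same error for "missing" and "not yours", so callers cannot
        # use the response to discover which documents exist.
        raise AccessDenied(doc_id)
    return doc["body"]
```

A login screen in front of this function would be a convenience. The check inside it is the boundary, because it still applies to a request that never touches the screen at all.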

3. Treat efficiency as a reasoning exercise, not just an optimisation phase

Efficiency is not just about shaving milliseconds off a procedure; it is about clarity of thought and disciplined problem-framing. For example, should a system traverse an entire dataset by default, or should it narrow the problem first by excluding information that cannot affect the outcome?

As with advocacy, the point is not to say everything that could be said, but to reach the decisive issue directly and without unnecessary detours.

4. Ask how and where things will fail

Every system fails eventually. The important question is whether failures are predictable, intelligible, contained and handled elegantly – or surprising and catastrophic.

As is also true when advising on contractual or litigation risk, the question is rarely whether something can go wrong. Rather, it is whether the consequences are understood, allocated and survivable.
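A small illustration of predictable, intelligible and contained failure, under the assumption that the input is a list of amounts typed in as text. The function names and the error wording are illustrative only.

```python
def parse_amount(text):
    """Convert text such as '1,250.50' to a number, or fail with a clear message."""
    try:
        return float(text.replace(",", ""))
    except ValueError:
        raise ValueError(f"cannot read {text!r} as an amount") from None


def total_amounts(raw_values):
    """Sum what can be parsed and report what cannot, rather than crash or guess."""
    total = 0.0
    problems = []
    for position, raw in enumerate(raw_values):
        try:
            total += parse_amount(raw)
        except ValueError as exc:
            # Contain the failure to this one entry and record where it occurred.
            problems.append((position, str(exc)))
    return total, problems


total, problems = total_amounts(["100", "1,250.50", "n/a"])
```

Here one bad entry does not bring down the whole calculation, and the caller is told which entry failed and why. Whether a partial total is acceptable at all is a judgement about consequences, not a purely technical question.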

5. Pop the hood early

Once something works, read the code, change it and break it. Understanding emerges far more reliably from inspection than from trust. On closer inspection, it may become clear that code which appears to work is not yet suitable for production environments or public release.

As with any apparently neat legal submission, it is usually better to test how it behaves under pressure than to assume it will survive first contact with your opponent.

Vibe coding can be an excellent way to spark ideas. Understanding how systems actually behave, particularly when they are stressed, scaled or misused, is what allows those ideas to be relied upon. In technology, as in law, that understanding is rarely optional.